Artificial Intelligence and Human Rights

Consumer Privacy

Cybersecurity

Data Protection

Democracy & Free Speech

Open Government

Platform Accountability & Governance

Privacy Laws

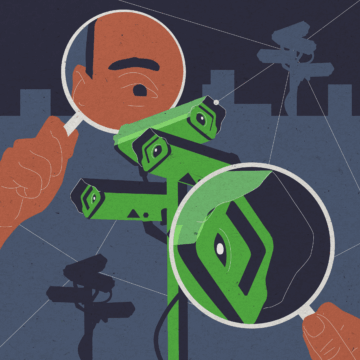

Surveillance Oversight

EPIC is a public interest research center in Washington, DC seeking to protect privacy, freedom of expression, and democratic values in the information age.

Join EPIC's Stop the Surveillance State Campaign to help us defend privacy and protect our democracy!

Featured Analysis

The Spirit of DOGE Is Alive and Well in the House’s So-Called Fraud Prevention Bills

This week, the House of Representatives votes on three bills that would permanently formalize the DOGE playbook, risking the privacy and liberty of every American for the sake of unproven fraud prevention methods.

All blog posts

Our Work

EPIC engages in policy research, amicus briefs, public education, litigation, publications, and advocacy to promote privacy in the digital age.

Explore our Work

Explore our past amicus briefs, testimony, litigation documents, and more in the Digital Library.

Access our Digital Library

EPIC Champions of Freedom 2026

Join us on September 22nd at the True Reformer Building for EPIC's 2026 Champions of Freedom event, focused on stopping the surveillance state.

22 Sep. 5:00 PM EDT

Good Luck Opting Out: Manipulative Design Patterns in Opt-Out Processes

Good Luck Opting Out investigates whether the processes provided by major online platforms use manipulative design patterns to make it more difficult for consumers to exercise their opt-out rights.

Join our Stop the Surveillance State Campaign

Commercial surveillance systems have become systems of government control, and they are threatening the foundations of our democracy. Join our campaign to stop the surveillance state!
